Intro

Sometimes you start building something just to test a stack. And then it grows into something bigger than you expected.

Building fast is easy. Building fast without losing control is not.

Librus started almost by accident. I wanted to build something real using everything modern at once: the Next.js App Router, SSR done properly, React Query hydration, Supabase Auth, Realtime, Storage, and RLS. Not a demo. Not a tutorial project. Something that behaved like a product.

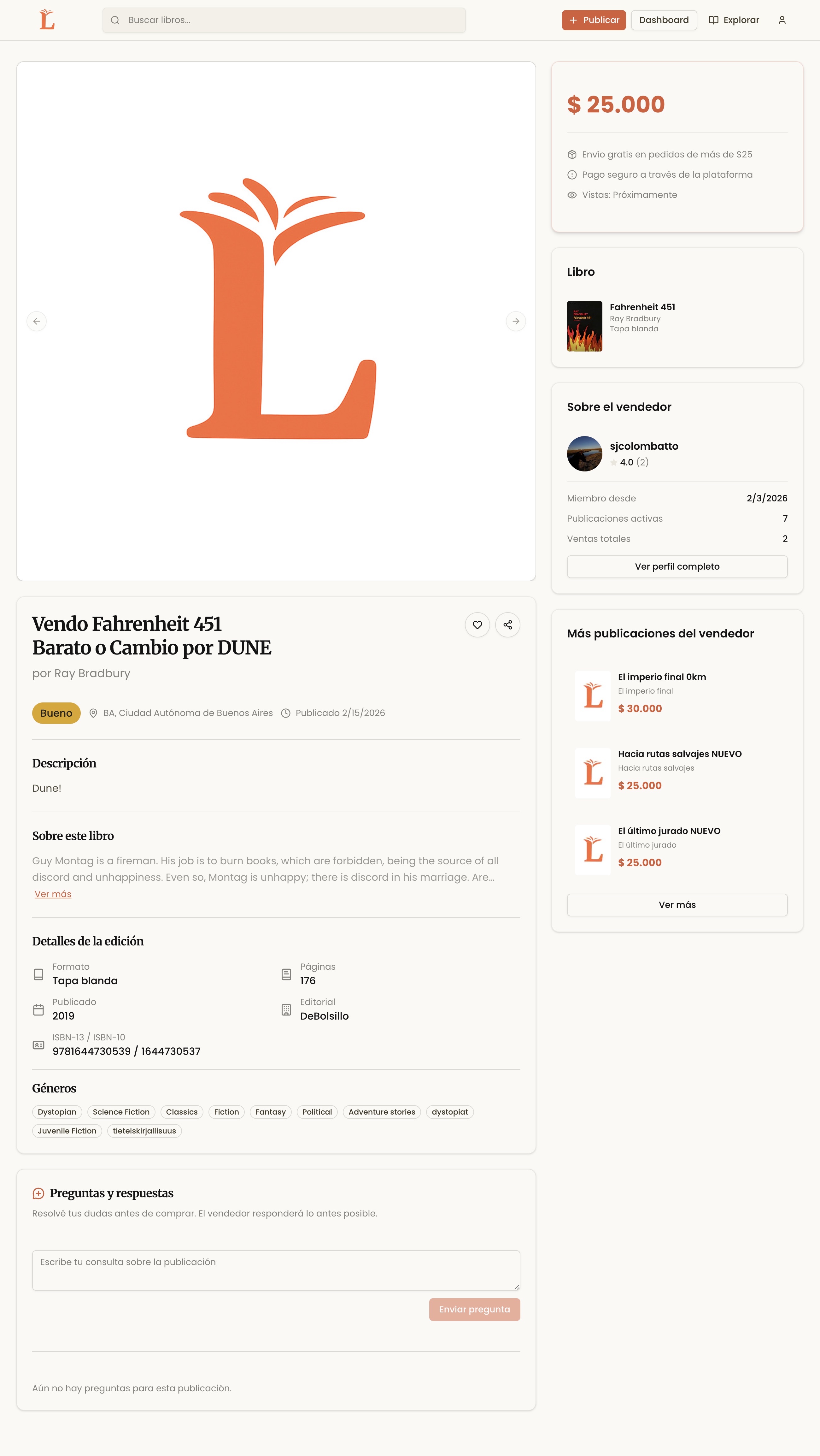

The idea of books came from closer to home. My partner is deep into the reading world — bookstagram, reading clubs, people who trade books, annotate them, collect them. It's a surprisingly intense niche. And if you think about it, almost everyone has books at home collecting dust. Even people who don't read much still have a shelf somewhere.

So the thought was simple: what if there was a place to sell or exchange used books, starting small, maybe Buenos Aires, maybe reading clubs? Not Amazon. Not MercadoLibre. Something more niche, more human.

At the beginning, though, it wasn't about the business. It was about the architecture.

It was a private MVP. A research project.

And it got serious faster than I expected.

Before Librus, There Was Clapp

Before Librus, I tried building something adjacent: an app for managing reading clubs. I called it Clapp, short for “Club de Lectura App”. Very creative, I know.

The idea was to centralize events, discussions, schedules, maybe even book tracking for clubs. It sounded simple on paper.

It wasn’t.

It became complex too fast. Too many roles. Organizers. Members. Invited users. Too many states. Upcoming events, past events, confirmations, discussions, notifications. Too many flows. I was trying to solve coordination, communication, scheduling and social interaction all at once.

Technically, I built a lot. APIs, authentication, schemas, integrations. It worked. But it didn’t feel focused.

Librus is different.

It does one thing: allow someone to publish a book and let someone else buy or exchange it.

That constraint makes the architecture cleaner. It forces better decisions.

Maybe the lesson wasn’t about BaaS or SSR.

Maybe it was about scope.

Moving Fast with Supabase

I chose Supabase mostly out of curiosity. I had heard good things. The promise was simple: full Postgres, authentication, storage, realtime, all integrated.

The first thing I liked was the local setup. You run Docker and suddenly you have an entire backend ecosystem running on your machine: database, auth server, storage buckets, everything. No external services. No "fake mocks".

Within days, I had authentication working with SSR, image uploads to buckets, and a working realtime chat.

For server-side sessions, I used @supabase/ssr and let it manage cookies at the boundary of Next.js. It feels clean — the same domain, the same lifecycle. No separate backend, no CORS headaches.

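```ts
import { cookies } from 'next/headers'
import { createServerClient } from '@supabase/ssr'

export async function createServerSupabaseClient() {
  // cookies() is async in Next.js 15; awaiting it is harmless on older versions.
  const cookieStore = await cookies()

  return createServerClient(
    process.env.NEXT_PUBLIC_SUPABASE_URL!,
    process.env.NEXT_PUBLIC_SUPABASE_ANON_KEY!,
    {
      cookies: {
        // Read every cookie on the incoming request.
        getAll: () => cookieStore.getAll(),
        // Write refreshed session cookies back through the Next.js cookie store.
        setAll: (cookiesToSet) => {
          cookiesToSet.forEach(({ name, value, options }) =>
            cookieStore.set(name, value, options)
          )
        },
      },
    }
  )
}
```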

I didn’t have to think about token rotation or httpOnly cookies. It just worked.

That’s the beauty of BaaS. You feel powerful very quickly.

But that speed hides something else: dependency.

And at that stage, I didn't care. I wanted to build.

Modeling the Data

The part that changed everything wasn’t authentication. It was the data model.

At first, I thought: a listing is just a book someone wants to sell. Simple.

But books are not simple.

One book can have multiple editions — hardcover, paperback, different publishers, different ISBNs, even different contributors. I didn’t want to duplicate the "same book" five times just because of format.

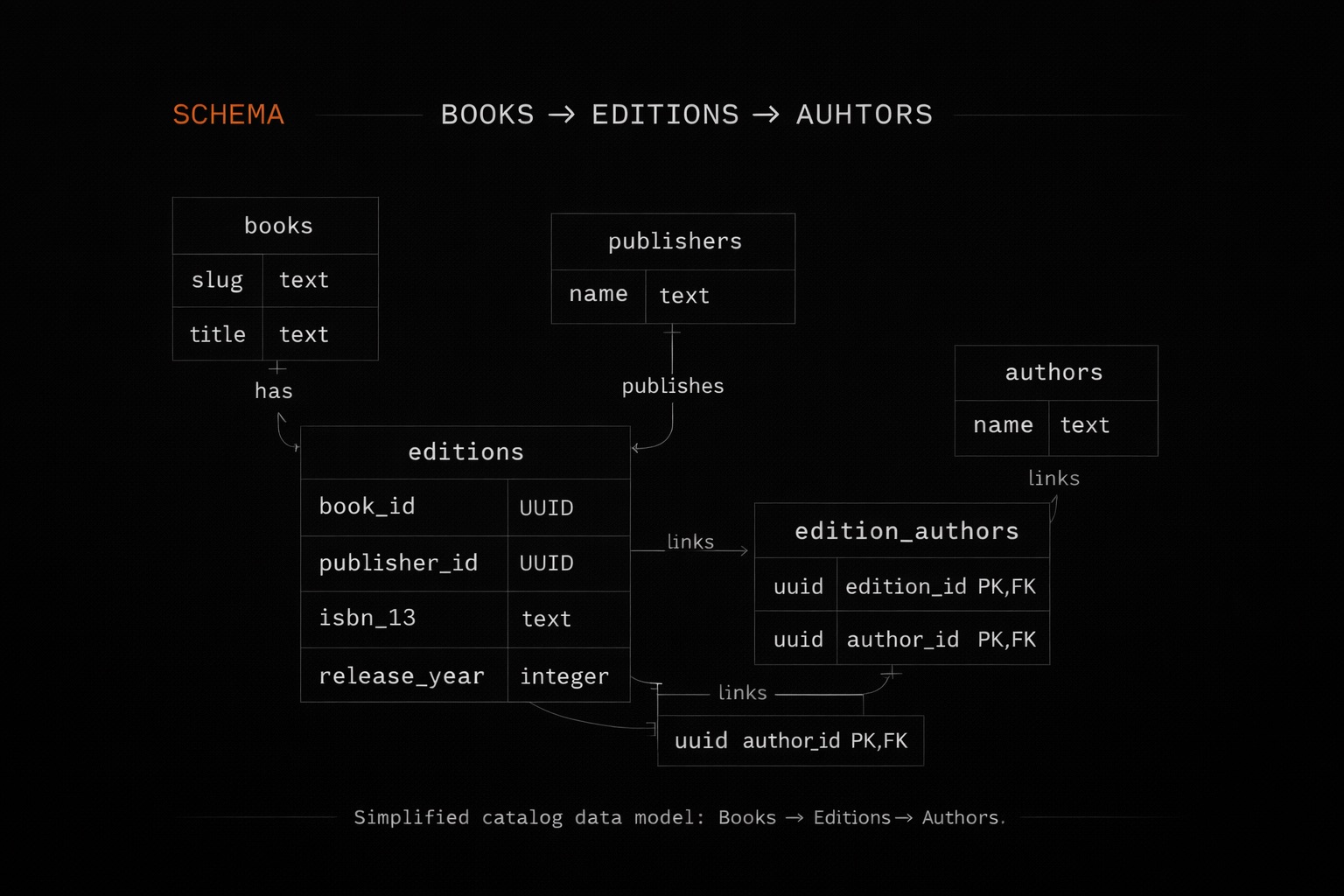

So I separated it:

- A Book as a stable entity.

- An Edition that belongs to a book.

- Authors attached through a many-to-many relation.

It sounds obvious now, but getting that right changes everything. It lets you group listings by book, filter by format, search by author, and avoid chaos in the database.
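
A minimal sketch of that split as TypeScript types, with two small helpers for grouping listings by book and resolving a book's authors. The field names (`isbn`, `format`, `editionId`, and so on) and both helper functions are assumptions for illustration, not the actual Librus schema.

```ts
// Hypothetical shapes; field names are illustrative, not the real Librus schema.
type Format = 'hardcover' | 'paperback'

interface Book { id: string; title: string }
interface Author { id: string; name: string }
interface BookAuthor { bookId: string; authorId: string } // many-to-many join row
interface Edition { id: string; bookId: string; isbn: string; format: Format }
interface Listing { id: string; editionId: string; price: number }

// Resolve the authors of a book through the join rows.
function authorsOf(book: Book, links: BookAuthor[], authors: Author[]): Author[] {
  const ids = new Set(links.filter((l) => l.bookId === book.id).map((l) => l.authorId))
  return authors.filter((a) => ids.has(a.id))
}

// Group listings under their parent book, regardless of edition or format.
function groupListingsByBook(listings: Listing[], editions: Edition[]): Map<string, Listing[]> {
  const bookOfEdition = new Map<string, string>()
  for (const e of editions) bookOfEdition.set(e.id, e.bookId)

  const grouped = new Map<string, Listing[]>()
  for (const l of listings) {
    const bookId = bookOfEdition.get(l.editionId)
    if (bookId === undefined) continue // skip listings that point at an unknown edition
    const bucket = grouped.get(bookId) ?? []
    bucket.push(l)
    grouped.set(bookId, bucket)
  }
  return grouped
}
```

Because a listing points at an edition and an edition points at a book, a hardcover and a paperback of the same title end up in the same group without duplicating the book row.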

When someone publishes a book, the system doesn’t just insert a row. It might:

- Upsert a publisher.

- Deduplicate authors.

- Upsert a book.

- Upsert an edition.

- Create the listing.

- Attach images.

- Store trade preferences.

That’s when I realized this wasn’t just a CRUD app anymore. It was a small system.
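
The steps above can be sketched against an in-memory store. Everything here is hypothetical: the `Store` shape, the `upsert` helper, the normalization rule, and the choice of natural keys (ISBN for editions, title plus authors for books) are assumptions to show the flow, not the Librus implementation. In a real database the whole thing would presumably run inside a single transaction.

```ts
// In-memory stand-in for the database; every name here is hypothetical.
interface PublishInput {
  title: string
  authors: string[]
  publisher: string
  isbn: string
  format: string
  price: number
  imageUrls: string[]
  acceptsTrades: boolean
}

interface EditionRow { id: string; bookId: string; publisherId: string; format: string }
interface ListingRow { id: string; editionId: string; price: number; imageUrls: string[]; acceptsTrades: boolean }

interface Store {
  publishers: Map<string, string> // normalized name -> id
  authors: Map<string, string>    // normalized name -> id
  books: Map<string, string>      // normalized title + author ids -> id
  editions: Map<string, EditionRow> // isbn -> row
  listings: ListingRow[]
}

function createStore(): Store {
  return { publishers: new Map(), authors: new Map(), books: new Map(), editions: new Map(), listings: [] }
}

const normalize = (s: string): string => s.trim().toLowerCase()

// Return the existing id for a key, or insert a new one.
function upsert(table: Map<string, string>, key: string, prefix: string): string {
  const existing = table.get(key)
  if (existing !== undefined) return existing
  const id = `${prefix}_${table.size + 1}`
  table.set(key, id)
  return id
}

function publishListing(store: Store, input: PublishInput): string {
  const publisherId = upsert(store.publishers, normalize(input.publisher), 'pub')

  // Deduplicate authors so "Borges" and " borges " resolve to one row.
  const uniqueNames = Array.from(new Set(input.authors.map(normalize)))
  const authorIds = uniqueNames.map((name) => upsert(store.authors, name, 'auth'))

  const bookKey = `${normalize(input.title)}|${authorIds.slice().sort().join(',')}`
  const bookId = upsert(store.books, bookKey, 'book')

  // One edition per ISBN, linked to its book and publisher.
  let edition = store.editions.get(input.isbn)
  if (edition === undefined) {
    edition = { id: `ed_${store.editions.size + 1}`, bookId, publisherId, format: input.format }
    store.editions.set(input.isbn, edition)
  }

  // The listing carries its images and trade preference.
  const listing: ListingRow = {
    id: `listing_${store.listings.length + 1}`,
    editionId: edition.id,
    price: input.price,
    imageUrls: input.imageUrls,
    acceptsTrades: input.acceptsTrades,
  }
  store.listings.push(listing)
  return listing.id
}
```

Publishing the same title twice in two formats should leave one book, one author, two editions, and two listings.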

Performance Without Extra Infrastructure

One of my personal goals was to make the first load feel fast without introducing any external cache.

So I leaned into SSR and React Query hydration.

On server render, I prefetch the data. Then I dehydrate the query client and pass it to the client through a hydration boundary.

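```tsx
import { dehydrate, HydrationBoundary, QueryClient } from '@tanstack/react-query'

// This runs inside an async Server Component (for example, a page file).
// `filters`, `getListings`, and `ListingsPage` come from the app's own modules.
const queryClient = new QueryClient()

// Fetch on the server so the cache is already populated at render time.
await queryClient.prefetchQuery({
  queryKey: ['listings', filters],
  queryFn: () => getListings(filters),
})

// Serialize the cache and hand it to the client tree.
return (
  <HydrationBoundary state={dehydrate(queryClient)}>
    <ListingsPage />
  </HydrationBoundary>
)
```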

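The first paint comes from the server. The client already has the cache filled. As long as the query has a non-zero staleTime, so the hydrated data is not treated as stale the moment the component mounts, there is no double fetch and no awkward loading flicker.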

I could’ve relied only on App Router’s data fetching, but the moment the app needed real “application behavior” — mutations, cache invalidation, refetch on focus, consistent loading/error states — I wanted a client-side data layer I could reason about. App Router gives me control over the first render and server composition. React Query gives me control over what happens after: how data stays in memory, when it becomes stale, and what needs to be refreshed after an action. SSR for the first paint, React Query for everything that happens next.

That separation also forced me to think about ownership of responsibility. The database owns integrity and relationships. The server owns composition and security. The client owns interaction and short-lived state. When those boundaries blur, complexity explodes.

After that, everything becomes about invalidation.

Too much invalidation and you create loops. Too little and the UI lies.

I had moments where changing filters quickly would trigger overlapping requests. Race conditions appear. The last response isn’t always the last intention. You learn to respect query keys. Not because they're trendy. Because they matter.
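
A toy model of why the key matters. This is not React Query's implementation; `KeyedCache`, `keyFor`, and the `Filters` shape are made up for illustration. The idea is that every response is stored under the key it was requested with, and the UI only reads the entry for the filters it currently cares about, so a late response for old filters cannot overwrite the current view.

```ts
// Hypothetical filter shape; undefined values are ignored when building the key.
type Filters = Record<string, string | undefined>

// Serialize filters with sorted keys so equal filters always give equal cache keys.
function keyFor(filters: Filters): string {
  const parts = Object.keys(filters)
    .sort()
    .filter((k) => filters[k] !== undefined)
    .map((k) => [k, filters[k]])
  return JSON.stringify(['listings', parts])
}

class KeyedCache<T> {
  private entries = new Map<string, T>()
  private activeKey = ''

  // Record which filters the UI currently cares about (the last intention).
  setActive(filters: Filters): void {
    this.activeKey = keyFor(filters)
  }

  // Store a response under the key it was requested with, whenever it arrives.
  resolve(filters: Filters, data: T): void {
    this.entries.set(keyFor(filters), data)
  }

  // What the UI shows: only the data that matches the active filters.
  current(): T | undefined {
    return this.entries.get(this.activeKey)
  }
}
```

If the filters were left out of the key, both responses would land in the same slot and whichever arrived last would win, regardless of what the user selected last.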

This was one of the most valuable parts of the project. Not because it was flashy, but because it forced me to reason about data flow.

Realtime Chat — And the Cost of Convenience

Chat was the most fun and the most revealing part.

Each conversation is canonical: always two users, always stored in a deterministic order, so the same pair of people maps to exactly one conversation. Messages are persisted via API routes, and Supabase Realtime is only used for instant delivery.
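
One way to get that deterministic ordering is to sort the two user IDs before storing or looking up the pair. This is a sketch of the idea only; `canonicalPair` and `conversationKey` are hypothetical names, and comparing IDs as plain strings is an assumption.

```ts
// Order the two user ids so (a, b) and (b, a) map to the same conversation.
function canonicalPair(userA: string, userB: string): [string, string] {
  if (userA === userB) throw new Error('a conversation needs two distinct users')
  return userA < userB ? [userA, userB] : [userB, userA]
}

// A single lookup key for the pair, whichever user opens the chat.
function conversationKey(userA: string, userB: string): string {
  return canonicalPair(userA, userB).join(':')
}
```

With the pair always stored in the same order, a uniqueness rule on the two columns is enough to prevent duplicate conversations.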

When a message arrives, I update the cache manually with setQueryData. It feels reactive and clean.
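
The manual cache update can be kept as a small pure function. A sketch, assuming messages carry an `id` and an ISO 8601 `createdAt` string; the `ChatMessage` shape, the `appendMessage` name, and the `['messages', conversationId]` query key are all hypothetical.

```ts
// Hypothetical message shape.
interface ChatMessage {
  id: string
  conversationId: string
  body: string
  createdAt: string // ISO 8601 in UTC, so string order matches time order
}

// Pure updater: returns the cached list with the incoming message added.
// A message whose id is already cached is ignored, which covers the case
// where the same message is seen twice (for example, once from the API
// response and once from the realtime channel).
function appendMessage(old: ChatMessage[] | undefined, incoming: ChatMessage): ChatMessage[] {
  const current = old ?? []
  if (current.some((m) => m.id === incoming.id)) return current
  return [...current, incoming].sort((a, b) =>
    a.createdAt < b.createdAt ? -1 : a.createdAt > b.createdAt ? 1 : 0
  )
}

// With TanStack Query, this would be passed as the updater function:
// queryClient.setQueryData<ChatMessage[]>(['messages', conversationId], (old) =>
//   appendMessage(old, incoming)
// )
```

Keeping the updater pure makes it easy to test without a query client or a socket.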

But it is tightly coupled.

If tomorrow I decide to replace Supabase Realtime with raw WebSockets or another provider, I would need to rewrite that layer entirely. The transport is deeply integrated. It's not abstracted.
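
For contrast, here is a sketch of what a thin transport boundary could look like. It is not how Librus is built today; `MessageTransport`, `InMemoryTransport`, and the message shape are hypothetical. The chat UI would depend only on the interface, and a Supabase Realtime or raw WebSocket adapter would implement it.

```ts
// Hypothetical message shape delivered by any transport.
interface IncomingMessage { id: string; conversationId: string; body: string }
type Handler = (message: IncomingMessage) => void
type Unsubscribe = () => void

// The only surface the chat UI would depend on.
interface MessageTransport {
  subscribe(conversationId: string, onMessage: Handler): Unsubscribe
}

// In-memory implementation, useful for tests or local development.
class InMemoryTransport implements MessageTransport {
  private listeners = new Map<string, Set<Handler>>()

  subscribe(conversationId: string, onMessage: Handler): Unsubscribe {
    const handlers = this.listeners.get(conversationId) ?? new Set<Handler>()
    handlers.add(onMessage)
    this.listeners.set(conversationId, handlers)
    // The returned function removes this handler again.
    return () => {
      handlers.delete(onMessage)
    }
  }

  // Simulate the server pushing a message to everyone in the conversation.
  emit(message: IncomingMessage): void {
    this.listeners.get(message.conversationId)?.forEach((handler) => handler(message))
  }
}
```

The trade-off is one more layer to maintain, which is easy to skip when the goal is to ship a working chat quickly.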

That’s where you start feeling the other side of BaaS.

Speed comes with gravity.

Speed vs Control

Supabase gave me velocity. It gave me confidence. It let me focus on modeling instead of infrastructure.

But it also means:

- Auth is tied to it.

- Realtime is tied to it.

- Storage is tied to it.

- Policies live inside it.

Could I migrate? Yes. It’s still Postgres. That’s the comfort.

Would it be trivial? No.

And that’s the real lesson of this project.

Moving fast is not wrong. In fact, it’s necessary. But you have to understand what you’re coupling yourself to.

Where It Stands Now

Librus is still a private MVP. It lives in development. It’s not live. There are no users yet.

And that’s fine.

It did what it needed to do: it forced me to design something end to end. To think about modeling, SSR, cache invalidation, realtime systems, moderation flows.

You can see it running at:

Maybe it grows into something real. Maybe it stays as a technical exercise.

My Thoughts on Speed vs Control Today

Moving fast is not wrong. It’s necessary.

But today, with AI, moving fast is almost too easy. You generate, you connect things, everything works. For a moment, it feels like control.

Then you come back to it.

And it’s messy.

Things are coupled in ways you didn’t fully think through. Layers are mixed. It works, but you don’t really own it.

Speed gives you output. It doesn’t give you clarity.

Architecture still does.

Thanks for reading!